GPU Servers for Generative AI in SMEs

Maximum Performance. Full Control.

While public cloud services may seem convenient at first glance, medium-sized companies often pay the price later through rising, unpredictable costs and limited control over their data as usage scales. This is exactly where dedicated GPU servers come in: they bring the computing power of modern AI models directly into your data center or server room – secure, high-performing, and predictable.

Nelpx GmbH develops and delivers customized GPU and HPC servers specifically tailored to the requirements of medium-sized businesses – available fully water-cooled upon request, for maximum performance with minimal noise and optimal energy efficiency.

Call to Action: Speak with our experts and receive a GPU server concept that exactly matches your AI use cases and your budget.

Advantages of Dedicated GPU Servers for SMEs

With your own GPU server, you create the foundation to operate generative AI productively and economically within your company – from text and code generation to image, video, and speech models.

Predictable Costs instead of the Cloud Trap

Invest once, benefit long-term: Instead of ever-increasing cloud bills, you make a predictable capital investment (CapEx) and significantly reduce your ongoing operating costs (OpEx) – especially for always-on AI workloads.
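As a rough illustration of the trade-off between a one-time investment and recurring cloud bills, the following minimal sketch estimates a break-even point. All figures are hypothetical placeholders, not Nelpx prices or real cloud list prices, and the function name `break_even_months` is an assumption made for this example.

```python
import math


def break_even_months(capex: float, onprem_monthly_opex: float,
                      cloud_monthly_cost: float) -> float:
    """Months until cumulative on-prem cost falls below cumulative cloud cost."""
    monthly_saving = cloud_monthly_cost - onprem_monthly_opex
    if monthly_saving <= 0:
        # Under these assumptions the server never pays for itself.
        return math.inf
    return capex / monthly_saving


# Hypothetical figures for illustration only.
months = break_even_months(capex=60_000,
                           onprem_monthly_opex=1_000,   # power, maintenance, etc.
                           cloud_monthly_cost=4_000)    # comparable GPU instances
print(f"Break-even after {months:.1f} months")  # Break-even after 20.0 months
```

A real comparison would also account for staff time, depreciation, and actual utilization, so treat the result as a first-order estimate.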

Full Data Sovereignty and Compliance

Your sensitive data remains within your network: This makes it easier to meet compliance requirements (e.g., GDPR, customer contracts, industry regulations) and minimizes legal risks associated with external data storage.

Predictable Performance without Shared Resources

No shared GPU resources, no "noisy neighbors" taking away your capacity: Your workloads receive consistently high performance, shortening training times and keeping inference latencies stable.

Optimized for Your Use Cases instead of Off-the-Shelf

Whether you are building internal chatbots, copilot functions for employees, image generation, or code assistance – your hardware is sized to match your specific use cases.

Technological Independence

With your own infrastructure, you avoid dependency on individual hyperscalers and their pricing models. You retain the freedom to choose the tools, frameworks, and models that truly suit your needs.

Typical Generative AI Use Cases in SMEs

Many medium-sized companies face similar challenges: processes are fragmented, expertise is locked in people's heads or scattered across documents, and customers expect faster answers. Generative AI can become a productivity booster here:

Internal AI Assistants for specialist departments (Sales, Service, HR, Purchasing, Production)

Document and Knowledge Chatbots that answer questions regarding internal manuals, contracts, and technical documentation

Code Assistance and Automation in internal software development

Marketing and Content Generation (product descriptions, campaign drafts, social media ideas)

Image and Video Generation for product visualization, prototyping, or training content

Speech Use Cases such as transcription, meeting summaries, and voice bots

With a dedicated GPU server, you can introduce these use cases step-by-step – starting as a pilot, then expanding into a productive service for entire departments or locations.

On-Prem instead of Pure Cloud: The Ideal Hybrid Strategy

The future often lies not in "either/or" but in "both/and." Many SMEs perform best with a hybrid model: short-term experiments and tests in the cloud, while productive and sensitive workloads run on their own GPU servers.

Tests and Proof-of-Concepts in the cloud

Productive AI services for employees and customers on dedicated GPU servers

Sensitive data remains in your own network

Cost-intensive continuous workloads (e.g., chatbots, copilots) run locally

Integration into your existing IT landscape (AD, IAM, monitoring, backup)

Nelpx supports you in building this hybrid architecture so that you can leverage the advantages of both worlds while keeping costs, security, and complexity under control.
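The hybrid split described above can be expressed as a simple placement rule. This is only a sketch: the `Workload` fields and the `placement` function are hypothetical names chosen for illustration, and a real policy would likely weigh more criteria (cost, latency, contractual constraints).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    sensitive_data: bool  # touches confidential or personal data
    production: bool      # serves employees or customers
    continuous: bool      # runs permanently rather than in short bursts


def placement(w: Workload) -> str:
    """Return 'on-prem' or 'cloud' following the hybrid split above."""
    if w.sensitive_data or w.production or w.continuous:
        return "on-prem"
    return "cloud"  # short-lived tests and proof-of-concepts


# Hypothetical example workloads.
for w in [
    Workload("poc-image-generation", False, False, False),
    Workload("hr-document-chatbot", True, True, True),
]:
    print(f"{w.name} -> {placement(w)}")
```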

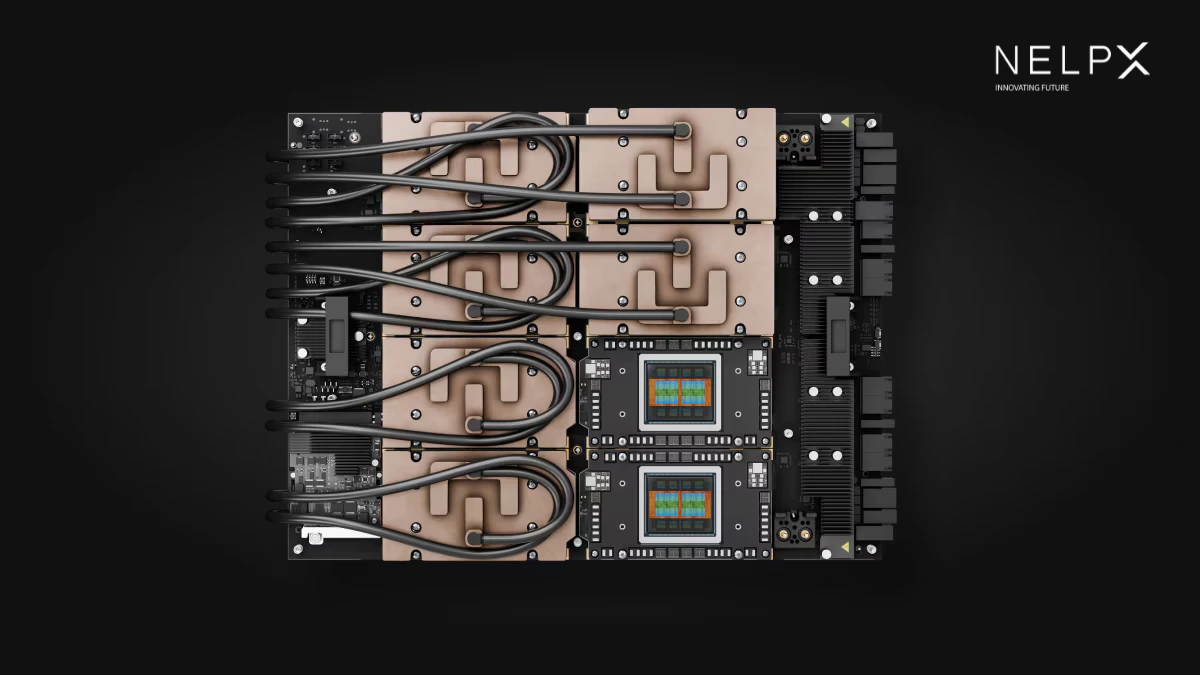

Water-Cooled GPU Servers: Maximum Performance, Minimum Problems

Classic air-cooled servers quickly reach their limits with high-density GPU systems. Noise, waste heat, thermal throttling, and high energy consumption are common issues. Water-cooled GPU servers address exactly these issues.

Your advantages with water-cooled systems:

Stable Peak Performance:

Water-cooled GPUs stay within their optimal temperature range and avoid thermal throttling. The result is consistently high clock frequencies and sustained computing power – even under full load.

More Power in Less Space:

Higher power density per rack unit allows you to fit more GPUs and more computing power into a smaller space without turning your server room into a sauna.

Reduced Noise Levels:

Fewer or slower fans significantly reduce noise pollution – a crucial factor if server rooms are located near workspaces.

Better Energy Efficiency:

Efficient cooling reduces energy costs and makes it easier to achieve sustainability goals and internal Green IT strategies.

Hardware Longevity:

Uniform temperatures protect components and increase the lifespan of your investment.

Nelpx plans and delivers fully water-cooled HPC and GPU server solutions upon request – including the planning of cooling circuits and coordination with your spatial conditions.

Typical GPU Server Profiles for Generative AI

Every SME has different requirements. Instead of a one-size-fits-all product, Nelpx provides specific configurations based on your use cases. Examples:

1. Compact Inference Server for Internal AI Assistance

Ideal for: Internal chatbots, document Q&A, light image and text use cases.

1–2 GPUs (e.g., current generation NVIDIA data center GPUs)

High RAM capacity for multiple parallel sessions

Fast NVMe SSDs for model and embedding storage

Designed for low latency and high availability

2. Performance Cluster for Multiple Teams and Locations

Ideal for: Parallel use by different departments, multiple LLM instances, combination of inference and light fine-tuning.

4–8 GPUs per node, scalable across multiple nodes

High-speed networking (e.g., 100 GbE or faster) for rapid model and data access

Separate resource pools for different departments or projects

Optional water cooling for continuous high utilization

3. HPC System for Demanding AI Workloads and Training

Ideal for: Fine-tuning larger models, complex AI pipelines, combination of classic HPC and AI.

8+ GPUs with high GPU-to-GPU throughput

Large RAM configuration, fast parallel storage

Optimized for batch training, long runs, and sophisticated experiments

Fully water-cooled for maximum stability under constant load

Seamless Integration into Your Existing IT Landscape

A GPU server is only valuable if it integrates smoothly into your existing IT. That’s why Nelpx ensures that the new system does not remain an isolated add-on, but behaves like a natural part of your environment.

Connection to existing identity and authorization systems (e.g., Active Directory, SSO)

Integration into your monitoring (e.g., existing monitoring tools, log systems)

Integration into backup and recovery concepts

Network connection according to your security specifications (segmentation, firewalls, DMZ concepts)

Support for common frameworks and runtimes (e.g., PyTorch, TensorFlow, open-source LLMs, container environments)

Focus on Security, Governance, and Compliance

In the SME sector, trust is essential – both internally and externally. When employees work with AI systems, it must be clear that data is treated confidentially and processed securely.

Data processing in your own data center or server room

Clear access concepts: Who is allowed to use which models, prompts, and data?

Separation of testing and production environments

Logging of relevant activities for auditing and compliance requirements

Support in defining guidelines for the use of generative AI in the company
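An access concept combined with audit logging, as listed above, might look like the following minimal sketch. The group names, model names, and the `may_use` helper are hypothetical; in practice the group membership would come from your identity system (e.g., Active Directory) rather than a hard-coded dictionary.

```python
import logging

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(message)s")
audit_log = logging.getLogger("ai-audit")

# Hypothetical mapping: which group may use which model.
ALLOWED_MODELS = {
    "hr": {"hr-assistant"},
    "engineering": {"code-assistant", "docs-chatbot"},
}


def may_use(groups: set, model: str) -> bool:
    """Check whether any of the user's groups is allowed to use the model."""
    allowed = any(model in ALLOWED_MODELS.get(g, set()) for g in groups)
    # Record every decision so it can be reviewed during an audit.
    audit_log.info("groups=%s model=%s allowed=%s", sorted(groups), model, allowed)
    return allowed


print(may_use({"engineering"}, "code-assistant"))  # True
print(may_use({"hr"}, "code-assistant"))           # False
```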

Methodology: How GPU Projects Work with Nelpx

To ensure you achieve productive results quickly, every project at Nelpx follows a structured yet pragmatic process:

Use Case Analysis:

Together, we clarify which generative AI applications you plan for the short, medium, and long term and which data is relevant.

Capacity and Architecture Planning:

Based on your requirements, we define performance goals, GPU count, storage needs, network connectivity, and cooling concepts (air or water).

Individual System Design:

We create a server design tailored to your needs, including specific components, expansion paths, and integration into your infrastructure.

Quote and Fine Specification:

You receive a transparent quote including variants, allowing you to clearly evaluate investment, runtime, and expansion options.

Delivery, Installation, and Commissioning:

Upon request, we accompany the entire commissioning process – from rack installation and software stack setup to the first test workloads.

Optimization and Support:

After go-live, we assist with fine-tuning, monitoring, capacity planning, and future expansions.
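To make the capacity-planning step more concrete, here is a back-of-the-envelope sketch for estimating how many GPUs a model needs for inference based on memory alone. It assumes that the weights dominate memory use and that a flat overhead factor (1.2 here, a rough assumption) covers KV cache and activations; the function name and the example GPU sizes are illustrative, not a Nelpx sizing method.

```python
import math


def estimate_gpu_count(params_billion: float, bytes_per_param: float,
                       gpu_memory_gb: float,
                       overhead_factor: float = 1.2) -> int:
    """Estimate the number of GPUs needed to hold a model for inference."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    weights_gb = params_billion * bytes_per_param
    required_gb = weights_gb * overhead_factor  # assumed headroom, not measured
    return math.ceil(required_gb / gpu_memory_gb)


# Hypothetical examples: 16-bit weights (2 bytes per parameter).
print(estimate_gpu_count(70, 2, 80))  # 70B model on 80 GB GPUs -> 3
print(estimate_gpu_count(7, 2, 24))   # 7B model on 24 GB GPUs  -> 1
```

Actual requirements depend on context length, batch size, quantization, and the serving framework, so measured test workloads should confirm any estimate like this.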

Who Benefits Most from Nelpx GPU Servers?

Our GPU and HPC servers are particularly well suited to medium-sized companies that:

Want to use generative AI strategically rather than just testing individual tools.

Must or want to keep sensitive data and knowledge in-house.

Value predictable costs and clear ROI arguments.

Need to supply multiple locations or teams with central AI infrastructure.

Are already experiencing performance bottlenecks with cloud solutions or classic servers.

Take sustainability and energy efficiency (e.g., through modern cooling concepts) seriously.

Your Next Step: Toward Your Own AI Infrastructure with Nelpx

Do you want to turn generative AI from an experiment into a productive corporate resource – backed by an infrastructure that fits your SME business? Then now is the right time to lay the foundation.